The number of elements to prefetch should be equal to (or possibly greater than) the number of batches consumed by a single training step. You could either manually tune this value or set it to `tf.data.AUTOTUNE`, which prompts the tf.data runtime to tune the value dynamically at runtime.

Note that the prefetch transformation provides benefits any time there is an opportunity to overlap the work of a "producer" with the work of a "consumer."

With prefetching enabled, as the data execution time plot shows, while the training step is running for sample 0, the input pipeline is reading the data for sample 1, and so on.

In a real-world setting, the input data may be stored remotely (for example, on Google Cloud Storage or HDFS). A dataset pipeline that works well when reading data locally might become bottlenecked on I/O when reading data remotely because of the following differences between local and remote storage:

- Time-to-first-byte: Reading the first byte of a file from remote storage can take orders of magnitude longer than from local storage.
- Read throughput: While remote storage typically offers large aggregate bandwidth, reading a single file might only be able to utilize a small fraction of this bandwidth.

In addition, once the raw bytes are loaded into memory, it may also be necessary to deserialize and/or decrypt the data (e.g. protobuf), which requires additional computation. This overhead is present irrespective of whether the data is stored locally or remotely, but can be worse in the remote case if data is not prefetched effectively.

To mitigate the impact of the various data extraction overheads, the `tf.data.Dataset.interleave` transformation can be used to parallelize the data loading step, interleaving the contents of other datasets (such as data file readers). The number of datasets to overlap can be specified by the `cycle_length` argument, while the level of parallelism can be specified by the `num_parallel_calls` argument.
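To illustrate what overlapping several datasets with `cycle_length` means, here is a minimal plain-Python sketch. It is not the tf.data implementation: the function name `interleave`, its parameters, and the sentinel `_NO_MORE` are hypothetical, it takes one element per source per turn, and it runs sequentially, so the parallelism controlled by `num_parallel_calls` is not modeled.

```python
import itertools

_NO_MORE = object()  # hypothetical sentinel: every input source has been opened


def interleave(sources, map_func, cycle_length=2):
    """Round-robin over at most `cycle_length` open sources at a time.

    `map_func` turns one source (for example, a file name) into an iterable
    of elements, playing the role of a data file reader.
    """
    pending = iter(sources)
    # Open the first `cycle_length` sources.
    active = [iter(map_func(src)) for src in itertools.islice(pending, cycle_length)]
    while active:
        still_active = []
        for it in active:
            try:
                item = next(it)
            except StopIteration:
                # This source is exhausted: open the next unopened one, if any.
                src = next(pending, _NO_MORE)
                if src is not _NO_MORE:
                    still_active.append(iter(map_func(src)))
                continue
            yield item
            still_active.append(it)
        active = still_active


def fake_reader(name):
    """Hypothetical reader: pretends each file holds two records."""
    return [f"{name}{i}" for i in range(2)]


print(list(interleave(["a", "b", "c"], fake_reader, cycle_length=2)))
# → ['a0', 'b0', 'a1', 'b1', 'c0', 'c1']
```

With `cycle_length=1` the sketch degenerates to reading the sources one after another, which is the sequential behaviour that interleaving is meant to improve on.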
The plot shows that performing a training step involves opening a file if it hasn't been opened yet, reading the data, and training on it.

However, in a naive synchronous implementation like this one, while your pipeline is fetching the data, your model is sitting idle. Conversely, while your model is training, the input pipeline is sitting idle. The training step time is thus the sum of the opening, reading, and training times.

The next sections build on this input pipeline, illustrating best practices for designing performant TensorFlow input pipelines.

Prefetching overlaps the preprocessing and model execution of a training step. While the model is executing training step s, the input pipeline is reading the data for step s+1. Doing so reduces the step time to the maximum (as opposed to the sum) of the training time and the time it takes to extract the data.

The tf.data API provides the `tf.data.Dataset.prefetch` transformation. It can be used to decouple the time when data is produced from the time when data is consumed. In particular, the transformation uses a background thread and an internal buffer to prefetch elements from the input dataset ahead of the time they are requested.
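To make the idea of a background thread filling an internal buffer concrete, the following is a minimal plain-Python sketch of prefetching. It only illustrates the producer/consumer decoupling and is not how tf.data implements it; the names `prefetch`, `buffer_size`, and `_DONE` are hypothetical.

```python
import queue
import threading

_DONE = object()  # hypothetical sentinel marking the end of the input


def prefetch(iterable, buffer_size=1):
    """Yield items from `iterable`, produced ahead of time by a background thread."""
    buffer = queue.Queue(maxsize=buffer_size)

    def producer():
        try:
            for item in iterable:
                buffer.put(item)  # blocks while the buffer is full
        finally:
            buffer.put(_DONE)     # always signal the end so the consumer can stop

    threading.Thread(target=producer, daemon=True).start()

    while True:
        item = buffer.get()       # blocks until the producer has an item ready
        if item is _DONE:
            return
        yield item


# While the loop body handles one item, the producer thread is already
# preparing the next one (up to `buffer_size` items ahead).
for sample in prefetch(range(3), buffer_size=2):
    print("training on sample", sample)
```

Because the producer runs while the consumer is busy, the time per step tends toward the larger of the two costs rather than their sum, which is the effect described above. A bounded buffer keeps the producer from running arbitrarily far ahead of the consumer.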

The `ArtificialDataset` is similar to the `tf.data.Dataset.range` one, adding a fixed delay at the beginning of and in between each sample. Its elements are declared with `output_signature = tf.TensorSpec(shape = (1,), dtype = tf.int64)`, and the delay between samples stands in for reading data (a line or record) from the file.

Next, write a dummy training loop that measures how long it takes to iterate over a dataset. The loop finishes by reporting the elapsed time with `print("Execution time:", time.perf_counter() - start_time)`.

To exhibit how performance can be optimized, you will improve the performance of the `ArtificialDataset`.

Start with a naive pipeline using no tricks, iterating over the dataset as-is. Under the hood, this is how your execution time was spent:
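The artificial dataset and the dummy training loop described here can be approximated without TensorFlow. The sketch below is a plain-Python stand-in, not the guide's `ArtificialDataset` class: the names `artificial_samples` and `benchmark`, their parameters, and all delay values are assumptions chosen for illustration.

```python
import time


def artificial_samples(num_samples=3, open_delay=0.03, read_delay=0.015):
    """Yield sample indices, sleeping to imitate file I/O (delays are assumed values)."""
    time.sleep(open_delay)       # opening the file
    for sample_idx in range(num_samples):
        time.sleep(read_delay)   # reading data (line, record) from the file
        yield sample_idx


def benchmark(make_dataset, num_epochs=2, step_delay=0.01):
    """Iterate over the dataset and return (elapsed_seconds, samples_seen)."""
    start_time = time.perf_counter()
    samples_seen = 0
    for _ in range(num_epochs):
        for _sample in make_dataset():
            time.sleep(step_delay)  # performing a (simulated) training step
            samples_seen += 1
    elapsed = time.perf_counter() - start_time
    print("Execution time:", elapsed)
    return elapsed, samples_seen


# Naive pipeline: reading and training strictly alternate, so their times add up.
benchmark(artificial_samples)
```

In this synchronous form each epoch costs roughly the opening delay plus, for every sample, the read delay and the step delay, which is the baseline that the later optimizations try to reduce.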

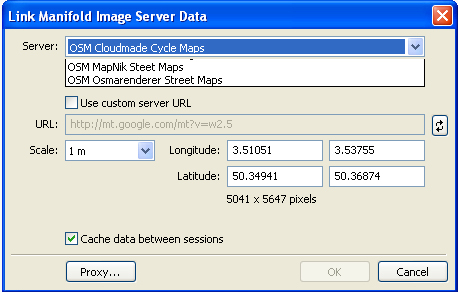

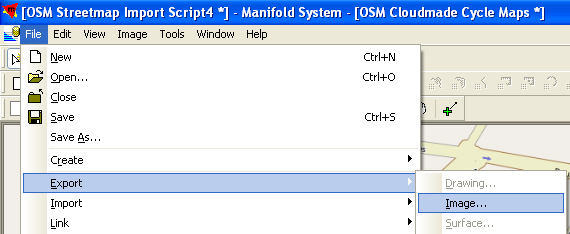


The tf.data API helps to build flexible and efficient input pipelines. This document demonstrates how to use the tf.data API to build highly performant TensorFlow input pipelines.

Before you continue, check the Build TensorFlow input pipelines guide to learn how to use the tf.data API.

GPUs and TPUs can radically reduce the time required to execute a single training step. Achieving peak performance requires an efficient input pipeline that delivers data for the next step before the current step has finished.